I'm trying to train my dog not to jump on the bed while I sleep. Up until recently, we've had him sleep in the crate at night, but ideally we'd be able to give Bennie a bit more freedom. He's allowed to hang out with us on the bed during the day, so it's surely confusing that he can't at night. He also doesn't understand English, so we can't just explain the rules to him!

To teach him the rules, we have to leave the crate door open at night, wait for him to jump on the bed, and then kick him off. We need to show him how his behavior is interpreted by the rules of the system.

GuidedTrack is a simple low-code application that allows you to make surveys, experiments, web applications, online courses, signup forms, and more. Spark Wave built GuidedTrack so that it could build advanced studies and prototype rapidly, and is now making the software available more broadly.

Alternative study platforms like Qualtrics and Typeform required so much clicking and dragging that studies were tedious and took forever to build. Additionally, it was difficult to package the study itself ...

In GuidedTrack, we started off with a very different onboarding plan than the one we have now. The first iteration (before I started working with them) loaded each new account with sample programs that were very well commented to explain precisely what each function did. The second iteration (after I started working with them) had "competencies," which were essentially worksheets that would walk you through the steps of creating various types of programs, like a survey, experiment, or interactive lesson. These were also precisely commented to describe each step the user would take and the mechanics of what they were doing. One of the ultimate ideas behind the competencies was that they would place help documentation into the context of mini-projects, and people would be able to find those projects efficiently.

When we started doing user interviews, we noticed a few things.

- It didn't seem to matter how precise our language was. There would always be misunderstandings.

- Nonprogrammers would look at heavily commented code and be intimidated. It was almost like the fact that there were so many comments sent a signal that there was a lot to learn. Additionally, the comments broke up the code, making it more difficult to grasp the bigger picture.

- Many people just skipped this optional instructional material.

We ultimately realized that both approaches, the sample programs and the competency system, were explainers.

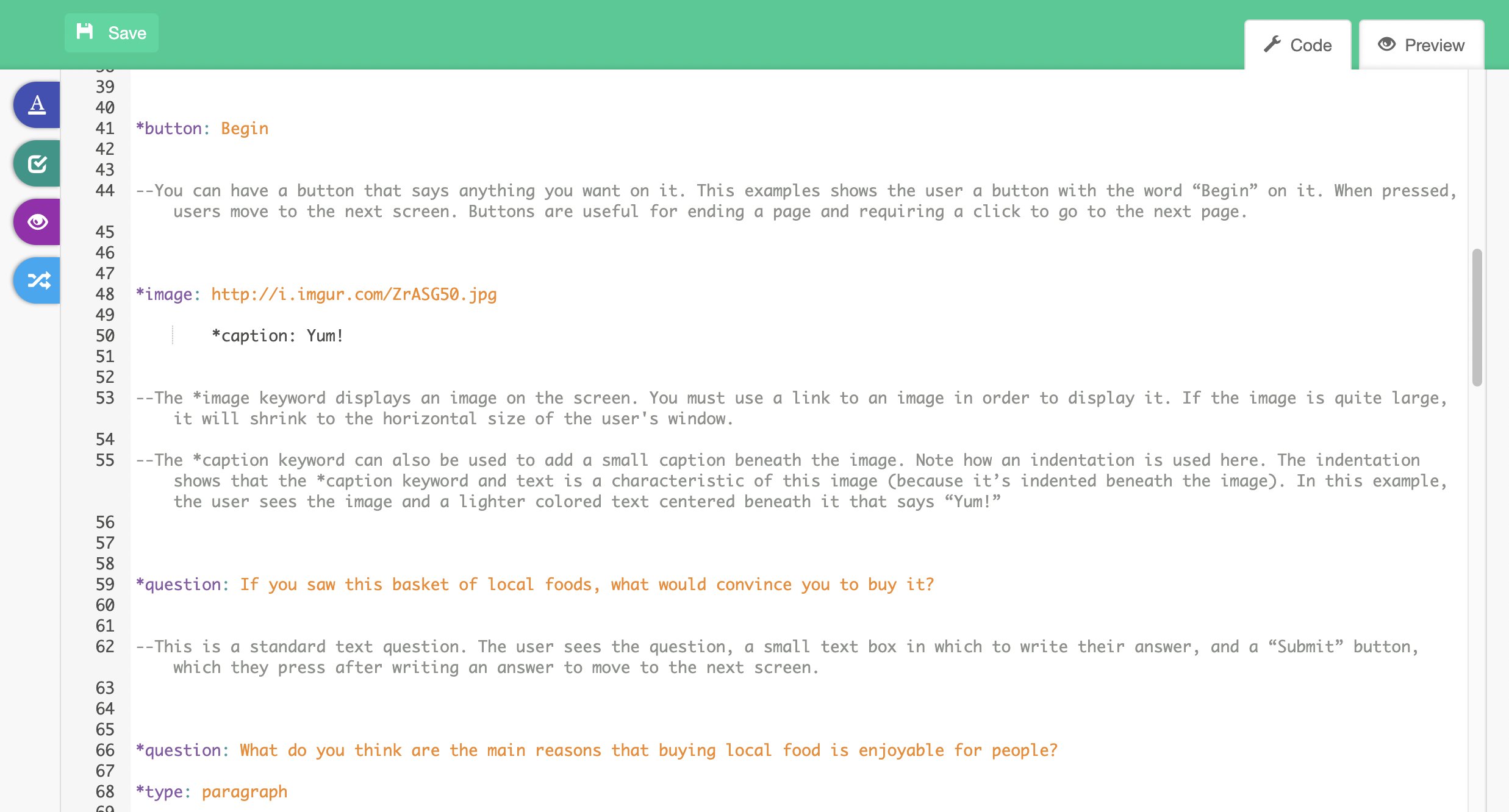

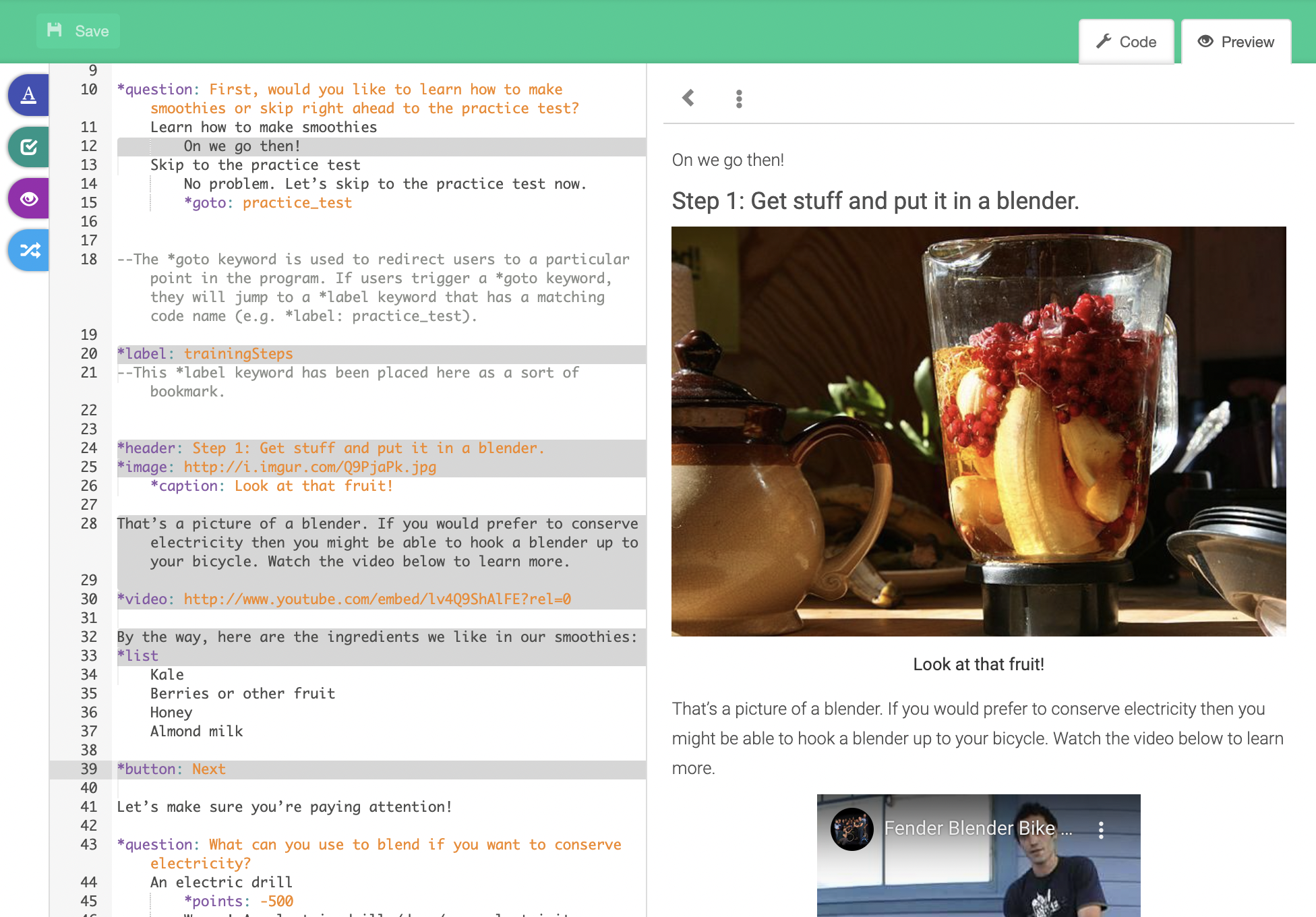

When we decided to focus on more fundamental feedback loops to make GuidedTrack inherently more learnable, we realized through user interviews that we didn't need to rely so much on explainers. With our side-by-side preview/debugger, we were literally able to remove comments and end up with something that is easier to understand and less intimidating. Our starter programs were suddenly self-explanatory. Compare the two images above, which show the same program.

A side-by-side preview tightened the feedback loop between behavioral inputs (writing code) and viewing the output, allowing the user to build a mental model of how GuidedTrack transforms their inputs into outputs. What's more, as long as you're using GuidedTrack, that feedback loop is unavoidable. More usage naturally leads to better understanding.

For more information on the GuidedTrack onboarding plan, see Onboarding Plan as Presented to GuidedTrack on 10-20-20.

Note - GuidedTrack gave me permission to share a plan for onboarding that I wrote for them late October. At this point, we had been working together for a few months and this is an iteration on plans we were already working on. Certain aspects have been updated (which I mark with UPDATE) and certain aspects have been added (not in this post, will likely come in future posts), but we're implementing most of this. This post was originally written for the GuidedTrack team, so it assumes some kno...